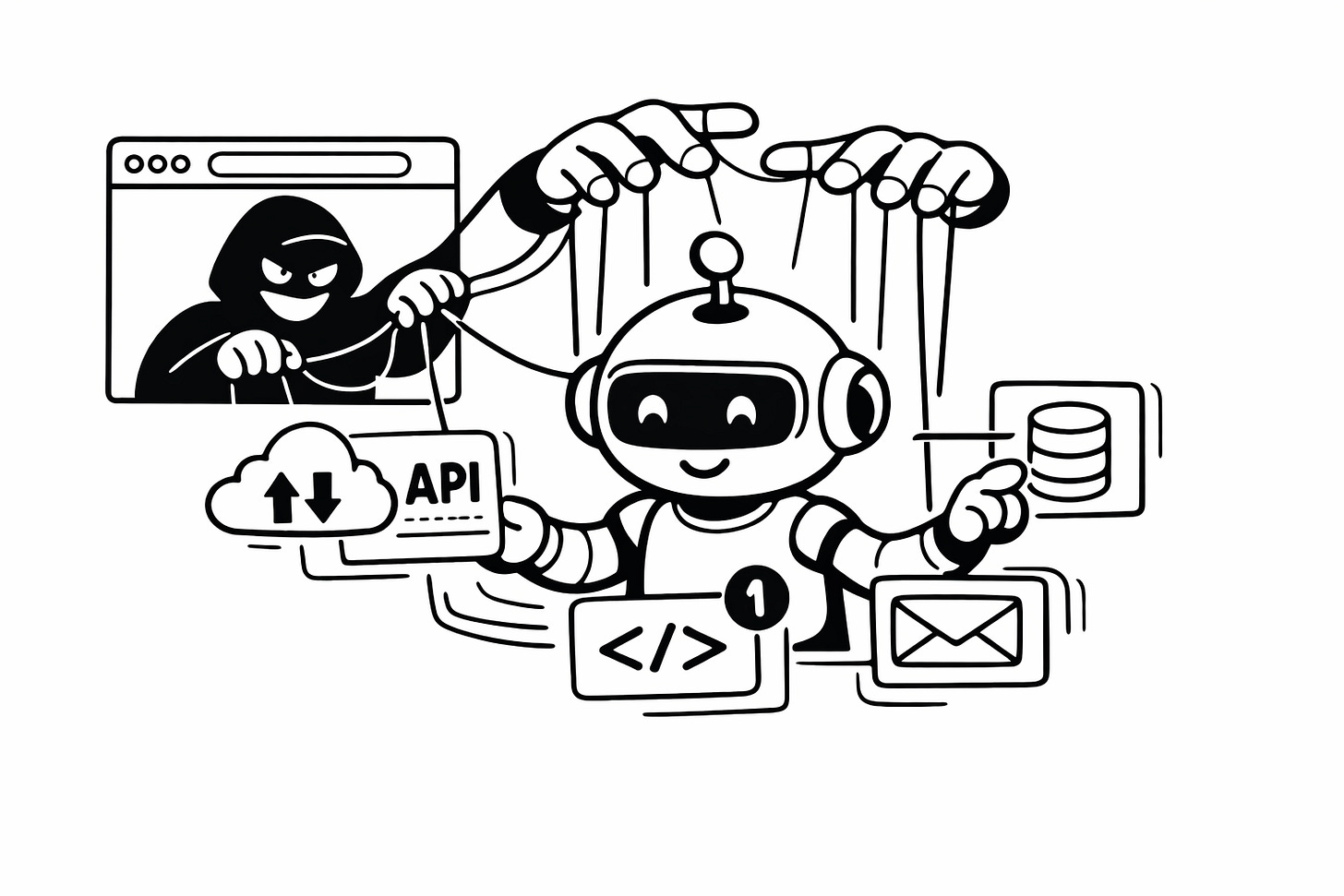

ClawJacked highlights the increasing security risks associated with AI agents

We’ve entered the age of agentic AI: systems that don’t just think but act.

Modern AI agents can talk to APIs, call tools, move data, create sub-agents, and even perform tasks on behalf of users. Every new capability increases what these systems can do. But it also increases the number of ways attackers can exploit them.

A vulnerability known as ClawJacked offers a clear example of why the security risks around AI agents deserve closer attention.

A security weakness in cloud development environments

ClawJacked is a security weakness affecting certain browser-based cloud development environments. The issue stems from how authentication tokens are stored and accessed during active development sessions.

In a typical attack scenario, a developer visits a malicious website while logged into a vulnerable cloud development environment. Through carefully crafted cross-origin interactions, the attacker can trick the browser into leaking authentication tokens associated with the active development session.

Once these tokens are obtained, attackers may be able to:

Access private source code repositories

Interact with cloud APIs

Modify development environments

Potentially pivot into broader cloud infrastructure

The attack does not require the victim to install any software or malware. Instead, it relies entirely on browser session behaviours and token handling mechanisms, which can be exploited through techniques such as session hijacking or cross-site scripting.
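The source does not describe the specific fix for ClawJacked. A common defence against this class of cross-origin abuse is for the development environment's server to reject any request, including a WebSocket handshake, whose `Origin` header is not on an explicit allowlist. The Python below is a minimal sketch of that check under this assumption. The allowlist entry `https://ide.example.com` and the function names are hypothetical.

```python
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical allowlist: the only origin the environment's own web UI is served from.
ALLOWED_ORIGINS = {"https://ide.example.com"}

def normalise_origin(origin: str) -> Optional[str]:
    """Reduce an Origin header to scheme://host[:port], or None if it is unusable."""
    parts = urlsplit(origin.strip())
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return None  # covers "null" and other opaque origins
    try:
        port = parts.port
    except ValueError:
        return None  # non-numeric or out-of-range port
    default_port = 443 if parts.scheme == "https" else 80
    if port is None or port == default_port:
        return f"{parts.scheme}://{parts.hostname}"  # hostname is already lower-cased
    return f"{parts.scheme}://{parts.hostname}:{port}"

def is_allowed_origin(origin: Optional[str], allowed: set = ALLOWED_ORIGINS) -> bool:
    """Exact match against the allowlist; no prefix or substring matching."""
    if not origin:
        return False  # conservative: treat a missing Origin header as untrusted
    normalised = normalise_origin(origin)
    return normalised is not None and normalised in allowed
```

Exact matching is the important design choice. A check such as `origin.startswith("https://ide.example.com")` would also accept `https://ide.example.com.evil.net`.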

In many ways, this vulnerability does not fundamentally change the threat model. Security professionals have long been sceptical about giving AI agents broad access to their environments. However, ClawJacked shows that many users are already doing exactly that, and that doing so can quickly lead to a serious compromise.

When AI agents have too much access

One of the core problems with AI agents is the amount of information they often have access to.

Agents frequently operate with large volumes of contextual data that are difficult for humans to fully oversee. At the same time, many users grant these systems far more privileges than they should.

As a result, an AI agent may have access to sensitive resources such as:

API keys

Login credentials

Critical internal documents

Financial systems such as bank accounts

If an attacker hijacks an agent with this level of access, the damage can extend far beyond the original system.

For example, if the agent has access to a user’s contact list or email history, a breach could place everyone in that network at risk. Past emails could also allow attackers to recreate the user’s writing style, making it easier to impersonate them and target colleagues, friends, or family members.
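One small, concrete way to reduce what a hijacked agent can reach is to stop it from inheriting every secret on the host. The Python sketch below launches an agent process with only an allowlisted set of environment variables. API keys or cloud credentials exported in the parent shell are therefore not passed through. The allowlist and the agent command are hypothetical. This addresses only one channel of exposure: files, browser sessions and mounted credentials need separate controls.

```python
import os
import subprocess
from typing import Mapping

# Hypothetical: the only variables this particular agent task actually needs.
AGENT_ENV_ALLOWLIST = {"PATH", "LANG", "HOME"}

def scrubbed_env(parent: Mapping[str, str], allowlist=AGENT_ENV_ALLOWLIST) -> dict[str, str]:
    """Copy only allowlisted variables so keys and tokens in the parent env are not inherited."""
    return {name: value for name, value in parent.items() if name in allowlist}

def run_agent(cmd: list[str]) -> subprocess.CompletedProcess:
    # Launch the (hypothetical) agent command with the scrubbed environment, not os.environ.
    return subprocess.run(cmd, env=scrubbed_env(os.environ), check=False)
```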

Safer ways to run AI agents

AI agent frameworks are software systems designed to perform tasks autonomously using artificial intelligence. Because of the risks described above, running them directly on personal or corporate machines is not recommended.

Instead, frameworks such as OpenClaw should be operated in controlled environments. One approach is to run them inside a Docker container on a separate server, which limits the damage if the agent or its environment is compromised.

Isolation and careful control over the environment are important steps toward reducing risk.
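As a rough illustration of a controlled environment, the Python sketch below assembles a `docker run` command with common hardening flags. These flags set a read-only root filesystem, drop all Linux capabilities, block privilege escalation, use an unprivileged user, apply no network by default, and impose resource limits. The image name `example/agent-runtime:latest` and the UID/GID are hypothetical placeholders. The source does not prescribe these particular flags.

```python
import shlex

# Hypothetical placeholder image; substitute the agent image you actually run.
AGENT_IMAGE = "example/agent-runtime:latest"

def hardened_docker_run(image: str = AGENT_IMAGE, network: str = "none") -> list[str]:
    """Build a `docker run` argument list that limits what a compromised agent can do."""
    return [
        "docker", "run", "--rm",
        "--read-only",                          # root filesystem is read-only
        "--tmpfs", "/tmp",                      # in-memory scratch space for temporary files
        "--cap-drop", "ALL",                    # drop every Linux capability
        "--security-opt", "no-new-privileges",  # block privilege escalation via setuid binaries
        "--user", "10001:10001",                # hypothetical unprivileged UID:GID
        "--network", network,                   # no network access unless a network is passed in
        "--memory", "2g",                       # cap memory use
        "--pids-limit", "256",                  # cap the number of processes
        image,
    ]

if __name__ == "__main__":
    # Print a shell-safe command line for review before running it on the isolated server.
    print(shlex.join(hardened_docker_run()))
```

An agent that must reach a model API would usually get a dedicated, egress-restricted Docker network passed as `network`, rather than broad host networking.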

Using zero trust with AI agents

To secure these systems, many experts argue that organisations need to apply Zero Trust principles.

The core idea behind Zero Trust is simple: never trust by default, always verify.

Instead of granting broad access “just in case”, permissions should follow a just-in-time approach: systems receive access only when it is needed and only for as long as it is required. This preserves the principle of least privilege, ensuring that entities have only the permissions necessary to perform specific tasks.
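A just-in-time grant can be sketched as a small broker that issues a token tied to one agent and one scope. The broker caps the token's lifetime and rejects it once it expires or is revoked. This is a minimal in-memory sketch. `JitBroker`, `Grant` and scope strings such as `repo:read` are hypothetical. A real deployment would also decide whether to approve each request, persist grants, and audit their use.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Grant:
    token: str
    agent_id: str
    scope: str          # a single permission, e.g. "repo:read"
    expires_at: float   # absolute time after which the grant is void

class JitBroker:
    """Hypothetical broker issuing narrow, short-lived grants on request."""

    def __init__(self, max_ttl: float = 300.0):
        self.max_ttl = max_ttl
        self._grants: dict[str, Grant] = {}

    def request(self, agent_id: str, scope: str, ttl: float, now: Optional[float] = None) -> Grant:
        now = time.time() if now is None else now
        ttl = min(ttl, self.max_ttl)  # never longer than policy allows
        grant = Grant(secrets.token_urlsafe(16), agent_id, scope, now + ttl)
        self._grants[grant.token] = grant
        return grant

    def check(self, token: str, agent_id: str, scope: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        grant = self._grants.get(token)
        if grant is None or now >= grant.expires_at:
            self._grants.pop(token, None)  # discard expired grants
            return False
        # The token only works for the agent and the exact scope it was issued for.
        return grant.agent_id == agent_id and grant.scope == scope

    def revoke(self, token: str) -> None:
        self._grants.pop(token, None)
```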

Another important shift is moving away from perimeter-based security. Rather than relying on a single protective boundary around a system, security controls should exist throughout the entire environment.
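Placing controls throughout the environment can mean that every service authenticates every request an agent makes, rather than trusting it because it arrives from inside the network. The sketch below assumes each agent holds its own secret key and signs each request body together with a timestamp using HMAC-SHA256. The `AGENT_KEYS` table and `agent-42` are hypothetical. The receiving service recomputes the signature, compares it in constant time, and rejects unknown agents or stale timestamps.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical per-agent secrets, e.g. provisioned by a secrets manager.
AGENT_KEYS = {"agent-42": b"demo-secret-do-not-use"}
MAX_SKEW_SECONDS = 30

def sign(agent_id: str, body: bytes, ts: int, key: bytes) -> str:
    """HMAC-SHA256 over agent id, timestamp and body."""
    msg = f"{agent_id}.{ts}.".encode() + body
    return hmac.new(key, msg, hashlib.sha256).hexdigest()

def verify_request(agent_id: str, body: bytes, ts: int, signature: str,
                   now: Optional[int] = None) -> bool:
    """Verify every request; nothing is trusted because of where it came from."""
    key = AGENT_KEYS.get(agent_id)
    if key is None:
        return False  # unknown agent: deny by default
    now = int(time.time()) if now is None else now
    if abs(now - ts) > MAX_SKEW_SECONDS:
        return False  # stale or future-dated: narrows the window for replaying a captured request
    expected = sign(agent_id, body, ts, key)
    return hmac.compare_digest(expected, signature)  # constant-time comparison
```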

Perhaps the most important principle is the assumption of breach. Systems should be designed with the expectation that attackers may already be inside the network, database, or application. Security architecture should reflect that reality.

Treat autonomous agents as experimental

For now, organisations should treat fully autonomous AI agents, which are systems that can operate independently without human intervention, as experimental technology.

Companies should educate staff about the dangers of AI agents, particularly around permissions and access control. If an over-privileged agent is compromised, its excess privileges can give an attacker unauthorised access to sensitive or critical systems, leading to data breaches or operational disruption.

ClawJacked illustrates how seemingly small weaknesses can have large consequences when powerful automated systems are involved.

Agentic AI multiplies both power and risk. Zero Trust provides a framework for keeping that power under control.

Every agent must prove who it is, justify what it wants to access, and continuously earn trust. Only then can organisations safely harness the capabilities of autonomous systems without exposing themselves to attackers.
